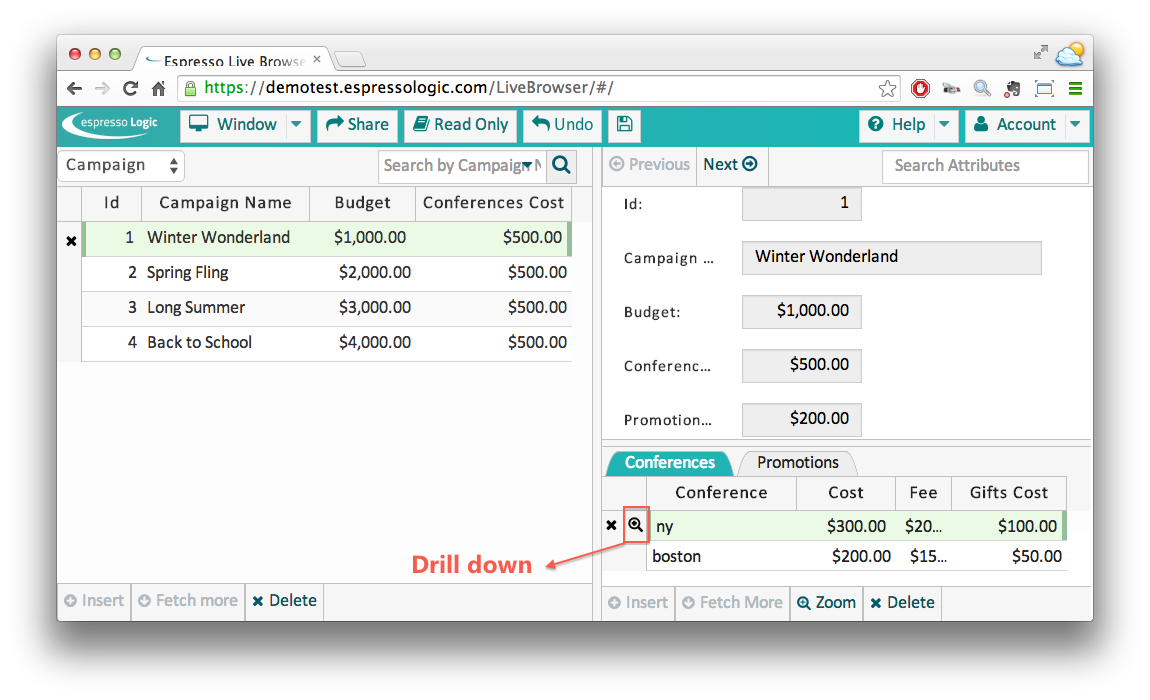

Since the screens are derived from the schema, they reflect schema changes automatically. So our new Cost attributes show up, as does the capability to “drill down” to see related Gift data:

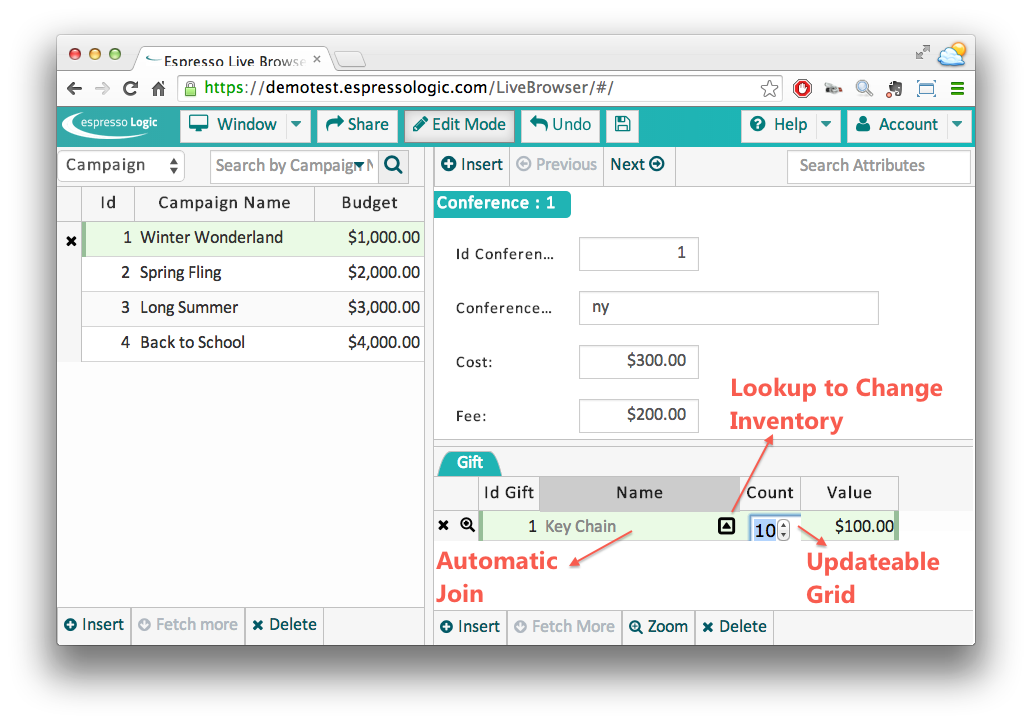

Updateable Grids and Foreign Key Lookups are standard patterns in data maintenance applications, and are created automatically.

In the above diagram, the Name column actually displays the Inventory Name rather than IdInventory, which is the foreign key. The system recognized this common pattern (numeric foreign keys, e.g., IdInventory) and automatically created a join to a more suitable column (the Name). This novel approach makes the app much more useful out of the box.
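As a rough illustration of the foreign-key display heuristic, the Python sketch below infers a lookup from an assumed naming rule (a numeric column named `Id<Table>` points at `<Table>`) and joins to a preferred text column. The `SCHEMA` dictionary, the preference order, and the assumption that the parent key has the same column name as the foreign key are all hypothetical; a real system would more likely read declared foreign-key constraints from the database catalog.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical schema metadata: table -> [(column, sql_type)], for illustration only.
SCHEMA: Dict[str, List[Tuple[str, str]]] = {
    "Inventory": [("IdInventory", "INTEGER"), ("Name", "VARCHAR"), ("Cost", "DECIMAL")],
    "Gift": [("IdGift", "INTEGER"), ("IdInventory", "INTEGER"), ("Count", "INTEGER")],
}

TEXT_TYPES = {"VARCHAR", "TEXT"}
PREFERRED = ("name", "description", "title")  # assumed preference order


def referenced_table(table: str, column: str, sql_type: str) -> Optional[str]:
    """Treat a numeric column named Id<Table> as a key into <Table> (assumed naming rule)."""
    if sql_type != "INTEGER" or not column.startswith("Id"):
        return None
    candidate = column[2:]
    # Skip the table's own primary key (e.g. Gift.IdGift) and unknown tables.
    if candidate == table or candidate not in SCHEMA:
        return None
    return candidate


def display_column(table: str) -> Optional[str]:
    """Pick a human-readable column: a preferred name first, else the first text column."""
    text_cols = [col for col, typ in SCHEMA[table] if typ in TEXT_TYPES]
    for wanted in PREFERRED:
        for col in text_cols:
            if col.lower() == wanted:
                return col
    return text_cols[0] if text_cols else None


def lookup_sql(table: str) -> str:
    """Build a query that shows the parent's display column next to each foreign key."""
    selects, joins = ["c.*"], []
    for column, sql_type in SCHEMA[table]:
        parent = referenced_table(table, column, sql_type)
        shown = display_column(parent) if parent else None
        if parent and shown:
            alias = f"p_{parent.lower()}"
            selects.append(f"{alias}.{shown} AS {column}_{shown}")
            # Assumes the parent's key column has the same name as the foreign key.
            joins.append(f"LEFT JOIN {parent} {alias} ON c.{column} = {alias}.{column}")
    return " ".join([f"SELECT {', '.join(selects)} FROM {table} c"] + joins)
```

For the Gift table this yields a left join to Inventory that exposes `Name` alongside `IdInventory`; a left join keeps Gift rows whose key is still empty.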

Logic: Spreadsheet-like Reactive Expressions

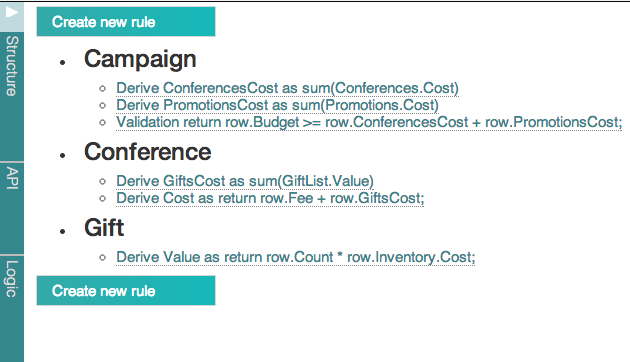

Based on the above, we captured the following specification, clear to both IT and Business Users:

| Question | Answer |
|---|---|
| What is meant by control budgets? | Conferences Cost plus the Promotions Cost cannot exceed the Budget |
| What is the Promotions Cost? | Sum of the Promotion Costs for that Campaign |
| What is the Campaign Conferences Cost? | Sum of the Conference Costs for that Campaign |
| What is the Conference Cost? | Fee plus the Gifts Cost |
| What is the Gifts Cost? | Sum of the Gift Values for that Conference |
| What is the Gift Value? | Count * Gift’s Inventory Cost |

But it is far more than a specification: the right column consists of executable Reactive Expressions for business logic. Let’s take a closer look at this meaningful new technology.

Update business logic is a significant element of database systems. In a conventional approach, it is tedious to code, requiring significant amounts of change detection and propagation logic, SQL handling (with caching for performance), etc. And it is far too complex for Business Users, so it does not facilitate collaboration.

We need to raise the abstraction level to make such logic substantially more expressive - simple and easy enough for Business Users to collaborate, yet powerful enough to address complex requirements. We’ve actually seen such a revolution in expressive power before: the spreadsheet. The core idea is remarkably simple and powerful: associate expressions with cells, and recompute when the referenced data changes.
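To make the spreadsheet idea concrete, here is a minimal Python sketch of reactive cells: each formula records the cells it references, and setting a cell recomputes every formula that depends on it. The `Sheet` class and its methods are hypothetical and only illustrate the principle; the cell names mirror the Conference Cost rule from the specification.

```python
from typing import Callable, Dict, List

Formula = Callable[[Dict[str, float]], float]


class Sheet:
    """Tiny spreadsheet: formula cells are recomputed when a referenced cell changes."""

    def __init__(self) -> None:
        self.values: Dict[str, float] = {}
        self.formulas: Dict[str, Formula] = {}
        self.dependents: Dict[str, List[str]] = {}  # cell -> formula cells that reference it

    def set(self, cell: str, value: float) -> None:
        self.values[cell] = value
        self._propagate(cell)

    def define(self, cell: str, refs: List[str], formula: Formula) -> None:
        """Associate a formula with a cell and register the cells it references."""
        self.formulas[cell] = formula
        for ref in refs:
            self.dependents.setdefault(ref, []).append(cell)
        self.values[cell] = formula(self.values)
        self._propagate(cell)

    def _propagate(self, changed: str) -> None:
        # Naive depth-first cascade: no cycle detection, and a cell reachable
        # by two paths may be recomputed more than once.
        for cell in self.dependents.get(changed, []):
            self.values[cell] = self.formulas[cell](self.values)
            self._propagate(cell)


sheet = Sheet()
sheet.set("Fee", 500.0)
sheet.set("GiftCost", 200.0)
sheet.define("Cost", ["Fee", "GiftCost"], lambda v: v["Fee"] + v["GiftCost"])
sheet.set("GiftCost", 350.0)  # Cost is recomputed to 850.0 without being asked
```

A production engine would also order recomputation by dependencies and reject cyclic definitions; this sketch keeps only the core "recompute on change" behavior.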

This is called Reactive Programming, and we apply this spreadsheet paradigm to database transaction logic:

- Declare expressions to derive database columns (e.g., Campaign.PromotionsCost := sum(Promotions.Cost)). These are exactly analogous to spreadsheet cell formulas.

Most interesting transactions are multi-table, so expressions must be able to reference related data. This enables, and indeed obligates, the system to take responsibility not only for change detection but also for persistence (reading and writing related data, caching, optimizations, etc.). Ideal implementations would provide flexibility in making aggregates stored (faster) or virtual (when the schema cannot be changed).

- Database updates are watched for changes in referenced values, which trigger reactions (e.g., adjustments) to the referencing values.

- Validations (e.g., Budget >= ConferencesCost + PromotionsCost) are provided as well.
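The six rules from the specification table can be written down as plain data, each naming the attribute it derives and the attributes it references. The Python sketch below (the rule tuples and `execution_order` are hypothetical, not the product's storage format) uses the standard library's `graphlib.TopologicalSorter` (Python 3.9+) to derive an execution order from those references, which is why the order in which rules are declared does not matter.

```python
from graphlib import TopologicalSorter
from typing import List, Tuple

# (derived attribute, kind, expression as written in the spec, referenced attributes)
Rule = Tuple[str, str, str, List[str]]

RULES: List[Rule] = [
    ("Campaign.BudgetCheck", "validation", "Budget >= ConferencesCost + PromotionsCost",
     ["Campaign.Budget", "Campaign.ConferencesCost", "Campaign.PromotionsCost"]),
    ("Campaign.ConferencesCost", "sum", "sum(Conferences.Cost)", ["Conference.Cost"]),
    ("Campaign.PromotionsCost", "sum", "sum(Promotions.Cost)", ["Promotion.Cost"]),
    ("Conference.Cost", "formula", "Fee + GiftCost", ["Conference.Fee", "Conference.GiftCost"]),
    ("Conference.GiftCost", "sum", "sum(Gifts.Value)", ["Gift.Value"]),
    ("Gift.Value", "formula", "Count * Inventory.Cost", ["Gift.Count", "Inventory.Cost"]),
]


def execution_order(rules: List[Rule]) -> List[str]:
    """Order rule targets so each runs after everything it references."""
    graph = {target: set(refs) for target, _kind, _expr, refs in rules}
    ordered = TopologicalSorter(graph).static_order()
    # static_order() also yields stored inputs such as Gift.Count; keep rule targets only.
    return [name for name in ordered if name in graph]
```

Calling `execution_order(list(reversed(RULES)))` produces an order that satisfies the same constraints: Gift.Value before Conference.GiftCost, before Conference.Cost, before Campaign.ConferencesCost, before the budget check. A cyclic set of rules would raise `graphlib.CycleError`.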

So, we simply enter this logic directly via a Browser-based GUI (this example requires no code, no IDE), which is then summarized for us like this (order is irrelevant, since the system orders execution by dependencies):

Such logic is fully executable. For example, as a Gift is entered, the Reactive Programming runs:

- Watches for the insert, and fires the value rule (retrieving the Inventory Cost)

- Watches the Value - its change fires the rule to adjust Conference.GiftCost (the system figures out the SQL, caches as appropriate, etc.). Such chaining (one rule triggers another) enables simple rules to be combined to address complex transactional logic, just as in a spreadsheet.

- Watches the GiftCost - its change adjusts Conference.Cost.

- Watches the Cost - its change adjusts Campaign.ConferencesCost.

- Watches the ConferencesCost, and checks the validation. If Budget is exceeded, the entire transaction is automatically rolled back, and an exception is returned to the user
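The chain above can be sketched in a few dozen lines of Python. This is an in-memory illustration under stated assumptions, not the product's engine: the class names are hypothetical, the Gift row itself is not stored, each aggregate is adjusted by the change in value (rather than re-summed with a query), and rollback is simulated with an undo log instead of a database transaction.

```python
from dataclasses import dataclass
from typing import Any, List, Tuple


class BudgetExceeded(Exception):
    """Raised when Budget >= ConferencesCost + PromotionsCost fails."""


@dataclass
class Campaign:
    budget: float
    promotions_cost: float = 0.0
    conferences_cost: float = 0.0


@dataclass
class Conference:
    campaign: Campaign
    fee: float
    gift_cost: float = 0.0

    def __post_init__(self) -> None:
        self.cost = self.fee + self.gift_cost  # Conference.Cost = Fee + GiftCost


@dataclass
class Inventory:
    cost: float


class Transaction:
    """Remembers each attribute's prior value so the whole change can be undone."""

    def __init__(self) -> None:
        self.undo: List[Tuple[Any, str, float]] = []

    def adjust(self, obj: Any, attr: str, delta: float) -> None:
        self.undo.append((obj, attr, getattr(obj, attr)))
        setattr(obj, attr, getattr(obj, attr) + delta)

    def rollback(self) -> None:
        for obj, attr, old in reversed(self.undo):
            setattr(obj, attr, old)


def insert_gift(conference: Conference, item: Inventory, count: int) -> float:
    """Run the reaction chain for one new Gift; returns Gift.Value."""
    tx = Transaction()
    value = count * item.cost                       # Gift.Value = Count * Inventory.Cost
    tx.adjust(conference, "gift_cost", value)       # Conference.GiftCost += new Value
    tx.adjust(conference, "cost", value)            # Cost moves by the same delta (Fee unchanged)
    campaign = conference.campaign
    tx.adjust(campaign, "conferences_cost", value)  # Campaign.ConferencesCost += delta
    if campaign.conferences_cost + campaign.promotions_cost > campaign.budget:
        tx.rollback()                               # undo every adjustment in this transaction
        raise BudgetExceeded(f"cost would exceed budget {campaign.budget}")
    return value
```

With a Budget of 1000 and an existing Conference Fee of 500, inserting 20 items at cost 10 raises ConferencesCost to 700. A second insert of 40 items would push it to 1100, so `BudgetExceeded` is raised and GiftCost, Cost, and ConferencesCost return to their prior values.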

Automatic Reuse: less work, more quality

Our created screens, of course, can be used for multiple Use Cases. Consider moving a Gift to a different Inventory item using the Lookup function mentioned above.

In “manual programming”, it’s not easy to spot all changes our logic must address. We might account for changes in Gift.Count, but overlook the implications for changes to Gift.InventoryId. This is a serious bug: compromised data integrity.

Reactive Programming is different: the system watches for (all!) changes in referenced data, and reacts appropriately. So we are already done: our Gift.Value rule accounts for the change to the Inventory foreign key.
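One way a system can watch every change in referenced data, without anyone listing the references by hand, is to record which attributes a rule actually reads. In the hypothetical Python sketch below, running the Gift.Value rule against a recording wrapper reveals that it reads both `count` and `inventory` (the foreign key), so moving a Gift to a different Inventory item triggers the same adjustment as changing its Count. The classes and helper names are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Any, Callable, Set


@dataclass
class Inventory:
    name: str
    cost: float


@dataclass
class Conference:
    gift_cost: float = 0.0


@dataclass
class Gift:
    conference: Conference
    inventory: Inventory  # the foreign key, modeled as an object reference
    count: int


def gift_value(gift: Any) -> float:
    """Gift.Value rule: Count * Inventory.Cost, read through the foreign key."""
    return gift.count * gift.inventory.cost


class ReadRecorder:
    """Wraps a row and records which of its attributes a rule reads."""

    def __init__(self, row: Any) -> None:
        self.row = row
        self.reads: Set[str] = set()

    def __getattr__(self, name: str) -> Any:
        # Only called for names not found on the recorder itself.
        self.reads.add(name)
        return getattr(self.row, name)


def referenced_attributes(rule: Callable[[Any], float], row: Any) -> Set[str]:
    recorder = ReadRecorder(row)
    rule(recorder)
    return recorder.reads


def update_gift(gift: Gift, **changes: Any) -> float:
    """Apply changes; if the rule reads any changed attribute, adjust the parent by the delta."""
    watched = referenced_attributes(gift_value, gift)
    old_value = gift_value(gift)
    for attr, new in changes.items():
        setattr(gift, attr, new)
    if not watched.intersection(changes):
        return 0.0
    delta = gift_value(gift) - old_value
    gift.conference.gift_cost += delta
    return delta
```

This covers only the Gift.Value rule: moving a Gift to a different Conference would also require the sum rule to adjust both the old and the new parent, which is not modeled here. Read recording also captures only the attributes read on the path actually executed, so rules with conditional branches would need more care.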

This is a big idea: automatic re-use. By encapsulating our logic directly into the data model attributes (rather than coding it for each specific Use Case), our solution has actually solved 15 Use Cases (add, change, delete for each of the 5 tables), without error. Not only is it less work, it’s higher quality.

Underlying Technology: REST, Mobile, JavaScript, Security

While this article focuses on driving analysis into working software, the underlying system architecture has several aspects that are attractive from a technology perspective.

REST

REST is used to communicate from the application to the server, for data access and logic.

REST is an attractive Web Services architecture that is network efficient, and an excellent basis for application integration.
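Because the logic runs on the server, a client should only need to send rows over REST, with the rules presumably firing as part of processing the request. The Python sketch below builds such a request with the standard library. The base URL, the `Gift` resource path, and the column names in the payload are hypothetical placeholders, not the product's actual API; real endpoints and authentication would come from the service's own documentation.

```python
import json
import urllib.error
import urllib.request
from typing import Any, Dict

# Hypothetical server address, for illustration only.
BASE_URL = "https://example.com/rest/demo"


def build_insert(resource: str, row: Dict[str, Any]) -> urllib.request.Request:
    """Build a JSON POST that asks the server to insert one row into a resource."""
    return urllib.request.Request(
        f"{BASE_URL}/{resource}",
        data=json.dumps(row).encode("utf-8"),
        headers={"Content-Type": "application/json", "Accept": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request) -> Dict[str, Any]:
    """Send the request; an HTTP error status is returned as data instead of raised."""
    try:
        with urllib.request.urlopen(request, timeout=10) as response:
            return {"status": response.status, "body": json.loads(response.read())}
    except urllib.error.HTTPError as err:
        return {"status": err.code, "body": err.read().decode("utf-8", "replace")}
```

A rejected transaction (for example, an exceeded budget) would presumably come back as an HTTP error status, which `send` hands back to the caller rather than raising, so the client can show the message to the user.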

Mobile

The application is JavaScript/HTML5, so it runs on mobile devices such as small form-factor tablets and phablets.

JavaScript

While we focus here on declarative behavior, JavaScript is also available as required. JavaScript’s popularity is growing rapidly, as it is the one language acceptable to all members of mixed-technology teams. It provides a perfect way to address complex logic and application integration, such as sending messages to other systems, mashing up multiple services, etc.

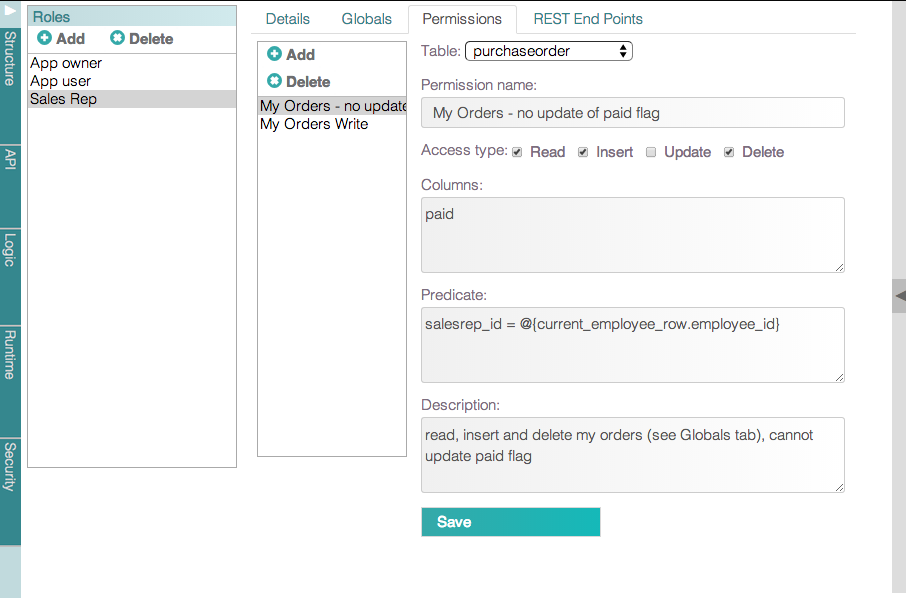

Security

Another key requirement is security. Beyond familiar endpoint access, the system must enforce security at the level of individual rows and columns. For example, you might want Sales Reps to see only their own orders.

Many systems provide such functionality in views, but that leads to a proliferation of views to define and maintain. A better approach is to encapsulate the security into the table as a role-based permission (predicate, below), and ensure it is reused across Multi-Table Resources.

@{current_employee} designates a database row associated with a user, so that its columns (e.g., employee_id) can be used in filter expressions.
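The idea of defining a permission once on a table and reusing it across resources can be sketched in Python as follows. The role names, the `ORDER_PERMISSIONS` mapping, and the representation of predicates as functions are assumptions for illustration; the product states its predicates in its own filter syntax using `@{current_employee}`, which this sketch does not attempt to reproduce. Here `current_employee` is simply a dict standing in for the row that `@{current_employee}` designates.

```python
from typing import Any, Callable, Dict, List

Row = Dict[str, Any]
# A permission predicate sees the candidate row and the current user's employee row.
Predicate = Callable[[Row, Row], bool]

# Hypothetical role-based permissions, defined once on the Orders table.
ORDER_PERMISSIONS: Dict[str, Predicate] = {
    "SalesRep": lambda order, me: order["employee_id"] == me["employee_id"],
    "Manager": lambda order, me: True,
}


def visible_orders(orders: List[Row], role: str, current_employee: Row) -> List[Row]:
    """Apply the table's permission for this role; no permission means no rows."""
    allowed = ORDER_PERMISSIONS.get(role)
    if allowed is None:
        return []
    return [o for o in orders if allowed(o, current_employee)]


def customers_with_orders(
    customers: List[Row], orders: List[Row], role: str, current_employee: Row
) -> List[Row]:
    """A multi-table resource reuses the same Orders permission for its nested rows."""
    mine = visible_orders(orders, role, current_employee)
    return [
        {**c, "orders": [o for o in mine if o["customer_id"] == c["id"]]}
        for c in customers
    ]
```

Because the nested resource calls the same table-level filter, a Sales Rep sees only their own orders whether they query Orders directly or through a Customer resource, and no per-resource view has to be defined.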

Summary

So, let’s recap what we've done:

- Developed initial schema.

- Created a working application with zero effort – just by connecting.

- Collaborated to gather data model changes and save behavior.

- Responded to changes, automatically for the screens, and with 6 rules for behavior. These mapped directly to our formalized analysis.

The Executable Requirements approach may have seemed simple - it is - but it belies the actual amount of work delivered:

- A complete web / mobile app

- Multi-table transactional logic behavior addressing 15 Use Cases, with assured re-use / quality, and transparent to Business Users

- An enterprise-class architecture, providing a REST-based platform for integration and Custom App Development

The entire process took under an hour - the logic required perhaps 5 minutes, the rest of the effort was data modeling, work required for any approach. By contrast, a conventional approach would have required weeks.

While designed for enterprise-class production, you may find considerable value in using it simply for prototyping and requirements clarification. Collaboration and rapid response to change have significant business value, delivering to today’s requirements rather than yesterday’s misunderstandings.

But what may be the most exciting is that this technology empowers a significantly wider set of professionals to imagine a system - and deliver it in remarkably short timescales - than ever before.

Our company has been executing on this vision, and now provides the elements described above. If you’d like to check out this technology, there’s a free eval with zero install (it’s a service) that you can try on your own database. We also provide an on-premises version to address high-security requirements.

And we’re always eager to exchange views about the technology - get in touch!

Resources: